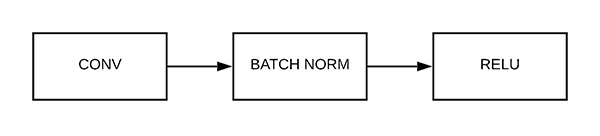

The Sequential class allows us to build neural networks on the fly without having to define an explicit class. This makes it much easier to rapidly build neural networks, because we can skip the part where we implement the `forward()` function ourselves: the Sequential class implements `forward()` for us. In this tutorial, we're going to learn how to use the PyTorch Sequential class to build a ConvNet.

The first thing we're going to do is start with our imports. We'll import all the stuff that we need.

The next thing we're going to do is create a dataset to train on, because we are not only going to construct a sequential model, we are also going to train it.

For this tutorial, we will use the CIFAR10 dataset. It has 10 classes. The images in CIFAR-10 are of size 3x32x32, i.e. 3-channel color images of 32×32 pixels in size. PyTorch has created a package called torchvision that has data loaders for common datasets such as ImageNet, CIFAR10, and MNIST.

The output of torchvision datasets are PIL images of range [0, 1]. We transform them to Tensors of normalized range [-1, 1]. Using torchvision, it's extremely easy to load CIFAR10:

`train_ds = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform)`

`train_ds_loader = torch.utils.data.DataLoader(train_ds, batch_size=32, shuffle=True, num_workers=2)`

`test_ds = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform)`

`test_ds_loader = torch.utils.data.DataLoader(test_ds, batch_size=32, shuffle=False, num_workers=2)`

From the training set, we're going to access the first element, which is a tuple that contains our image tensor and our label. We'll inspect the shape of the image, which is 3x32x32: the height and width are both 32, and this is a color image. Let's just use pyplot to show this image. The only extra step is to rescale this particular image back to a displayable range, since it was normalized when it was loaded; that is what allows the image to be plotted.

We are now ready to take this information and use it to build our first sequential model.

Create a Model

At this point, you may be wondering about operations like activation functions, pooling operations, and flattening operations. Most of these operations live in the neural network functional API, `torch.nn.functional` (usually imported as `F`). The cool thing is that PyTorch has also wrapped each of them inside a neural network module.

The Sequential class constructs the forward method implicitly by sequentially building the network architecture. The PyTorch Sequential model is a container class, also known as a wrapper class, that allows us to compose neural network modules. We can compose any neural network together using Sequential: we compose layers to make networks, and we can even compose multiple networks together.

Because our layers, activation functions, flatten operations, and pooling operations are all available as `torch.nn` modules, we can create sequential models from them. The main takeaway here is that having all of these functions wrapped up as neural network modules allows us to use the Sequential class to wrap multiple modules and compose them together. We can compose convolutions with max pooling operations, ReLU activation functions, and flatten operations, and this lets us build our models sequentially. This looks much easier than constructing a model the class way.
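To make the idea of composing modules concrete, here is a minimal sketch of a ConvNet built with `nn.Sequential` for 3x32x32 color images such as those in CIFAR10. The layer sizes (6 and 16 channels, 5x5 kernels, 120 and 84 hidden units) and the names `features`, `classifier`, and `model` are assumptions chosen for illustration, not necessarily the architecture this tutorial uses. It also shows two smaller Sequential networks being composed into one.

```python
import torch
import torch.nn as nn

# Hypothetical layer sizes, picked only to illustrate the technique.
# Convolutional part: layers, activations, pooling, and flatten are all modules.
features = nn.Sequential(
    nn.Conv2d(3, 6, kernel_size=5),   # 3x32x32 -> 6x28x28
    nn.ReLU(),
    nn.MaxPool2d(2),                  # 6x28x28 -> 6x14x14
    nn.Conv2d(6, 16, kernel_size=5),  # 6x14x14 -> 16x10x10
    nn.ReLU(),
    nn.MaxPool2d(2),                  # 16x10x10 -> 16x5x5
    nn.Flatten(),                     # 16x5x5 -> 400
)

# Fully connected part, ending in one output per class (10 classes).
classifier = nn.Sequential(
    nn.Linear(16 * 5 * 5, 120),
    nn.ReLU(),
    nn.Linear(120, 84),
    nn.ReLU(),
    nn.Linear(84, 10),
)

# Sequential containers are modules too, so networks can be composed.
model = nn.Sequential(features, classifier)

# A random batch stands in for real images; no forward() was written by hand.
batch = torch.randn(4, 3, 32, 32)
logits = model(batch)
print(logits.shape)  # torch.Size([4, 10])
```

Calling `model(batch)` runs each module in the order it was passed in, which is the `forward()` that Sequential builds implicitly.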